Here's my quick attempt at an Iteratee-based version. Even after this edit his answer is obviously much better than mine, and doesn't seem to run out of memory in the same way. NOTE: I've edited my code slightly to reflect the advice in Duncan Coutts's answer. Monadic lists/ ListT come from the "List" package on hackage (transformers' and mtl's ListT are broken and also don't come with useful functions like takeWhile) I used the "pureMD5" package here because "Crypto" doesn't seem to offer a "streaming" md5 implementation. Repeat () - this makes the lines below loopĬhunk <- liftIO $ BS.hGet handle chunkSize Handle <- liftIO $ openFile filename ReadMode StrictReadFileChunks chunkSize filename =

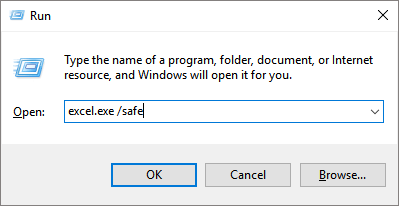

StrictReadFileChunks :: Int -> FilePath -> ListT IO BS.ByteString Import Prelude hiding (repeat, takeWhile)įmap (encode. Import System.IO (IOMode(ReadMode), openFile, hClose) Import (repeat, takeWhile, foldlL) - List My favorite tool for this job is monadic lists. You can use a tool such as Iteratee to help you structure strict IO code. This resource pattern is directly supported by .Īs dons suggested, you should use strict IO. This "withBlah" pattern is very common when dealing with expensive resources. It guarantees that the file gets closed when the body returns. The withFile function opens the file and passes the file handle to the body function. fileLine path = withFile path ReadMode $ \hnd -> do The main thing that is going on here is the line: mapM_ (\path -> putStrLn. fileLine path = BS.ByteString -> StringīTW, I happen to be using a different md5 hash lib, the difference is not significant. So instead of opening all files and then processing the content, you open one file and print one line of output at a time.

You don't need to use any special way of doing IO, you just need to change the order in which you do things. ^ Just gets the paths to all the files in the given directory.Īre there any ideas on how I could solve this problem?Įdit: I have plenty of files that don't fit into RAM, so I am not looking for a solution that reads the entire file into memory at once. Hex = concatMap (\x -> printf "%0.2x" (toInteger x)) Let getFileLine path = liftM (\c -> (hex $ hash $ BS.unpack c) " " path) (BS.readFile path) It seems Haskell's laziness is causing it not to close files, even after its corresponding line of output has been completed. All is fine and dandy except if the current directory has a very large number of files, in which case I get an error like: : : openBinaryFile: resource exhausted (Too many open files) I've written a small Haskell program to print the MD5 checksums of all files in the current directory (searched recursively).
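The advice running through this thread (process one file at a time, read strictly, and let a `withFile`-style function close the handle) can be sketched with nothing beyond `base` and `bytestring`. This is only a rough illustration, not anyone's posted code: FNV-1a stands in for MD5 so that no hashing package is required, and the names `foldFileChunks`, `fnvInit`, `fnvUpdate`, and the 64 KiB chunk size are made up for the example. `withBinaryFile` is the binary-mode sibling of `withFile` in `System.IO`.

```haskell
import qualified Data.ByteString as BS
import Data.Bits (xor)
import Data.Word (Word32)
import System.Environment (getArgs)
import System.IO (Handle, IOMode (ReadMode), withBinaryFile)
import Text.Printf (printf)

-- Hypothetical stand-in for a streaming MD5 context: 32-bit FNV-1a.
fnvInit :: Word32
fnvInit = 2166136261

-- Feed one strict chunk into the running hash.
fnvUpdate :: Word32 -> BS.ByteString -> Word32
fnvUpdate = BS.foldl' (\h b -> (h `xor` fromIntegral b) * 16777619)

-- Strictly fold over a file in fixed-size chunks. The handle is closed
-- when withBinaryFile returns, even if the body throws an exception.
foldFileChunks :: Int -> (a -> BS.ByteString -> a) -> a -> FilePath -> IO a
foldFileChunks chunkSize step z path =
  withBinaryFile path ReadMode (go z)
  where
    go acc hnd = do
      chunk <- BS.hGet hnd chunkSize        -- strict read, at most chunkSize bytes
      if BS.null chunk
        then return acc                     -- end of file
        else do
          let acc' = step acc chunk
          acc' `seq` go acc' hnd            -- force to WHNF before the next read

-- One line of output per file: hex digest, a space, then the path.
fileLine :: FilePath -> IO String
fileLine path = do
  h <- foldFileChunks 65536 fnvUpdate fnvInit path
  return (printf "%08x" h ++ " " ++ path)

-- Paths come from the command line; each file is opened, hashed,
-- printed, and closed before the next one is touched.
main :: IO ()
main = mapM_ (\p -> fileLine p >>= putStrLn) =<< getArgs
```

Swapping in a real streaming MD5 would mean replacing `fnvInit` and `fnvUpdate` with that library's initial context and update function. The strict chunk loop stays the same, at most one handle is open at any time, and memory use is bounded by the chunk size rather than the file size.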
